Load balancing Storage Made Easy File Fabric


About Storage Made Easy

Storage Made Easy provides an on-premises Enterprise File Fabric solution that is storage agnostic and can be used with either a single storage back end or multiple public/private storage systems. In the latter case, the File Fabric unifies the view across all access clients and implements common control and governance policies through its cloud control features.

Key benefits of load balancing

Here are a few key benefits:

- Ensures data is protected

- Helps make sure data is accessible at all times

- Enables businesses to meet growing data demands through scalability

How to load balance Storage Made Easy

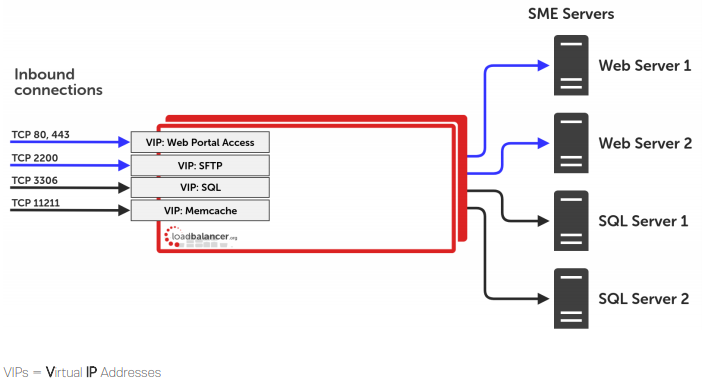

To deploy File Fabric as an HA deployment, 4 SME File Fabric instances are needed. When configured as per the Storage Made Easy guides, the topology will be as follows:

- 2 SME Web servers

- 2 SME SQL servers

Load balancing File Fabric requires source IP address affinity. This is true for both the layer 4 and layer 7 based load balancing methods described in the full Deployment Guide.
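To illustrate what source IP address affinity means, the following minimal Python sketch maps each client IP address to the same back-end server on every request. It is an illustration only, not how the appliance implements persistence: load balancers commonly keep a persistence table with timeouts rather than a pure hash. The function name `pick_server` and the server names are hypothetical.

```python
import hashlib
import ipaddress


def pick_server(client_ip: str, servers: list[str]) -> str:
    """Deterministically map a client IP address to one server."""
    # Validate and normalise the address so equivalent spellings hash alike.
    normalised = ipaddress.ip_address(client_ip).compressed
    digest = hashlib.sha256(normalised.encode("ascii")).digest()
    # Use the first 8 bytes of the digest as an integer index.
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


# Hypothetical names for the two SME Web servers.
web_servers = ["sme-web-1", "sme-web-2"]

first = pick_server("192.0.2.10", web_servers)
second = pick_server("192.0.2.10", web_servers)
# The same client address always lands on the same server.
```

Note that with a pure hash, adding or removing a server changes the mapping for some clients, which is one reason a persistence table is often preferred in practice.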

To provide load balancing and HA for File Fabric, the following VIPs are required:

- Web portal access

- SQL

- Memcache

- SFTP

The following table shows the ports that are load balanced:

| Port | Protocols | Use |
| --- | --- | --- |
| 80 | TCP/HTTP | Web Portal Access over HTTP |
| 443 | TCP/HTTPS | Web Portal Access over HTTPS |
| 3306 | TCP/SQL | SQL Service |
| 2200 | TCP/SFTP | SFTP Service |
| 12211 | TCP/Memcache | Memcache Service |
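As a rough aid for verifying a deployment, the sketch below records the ports from the table as data and attempts a plain TCP connection to each one. It only shows whether something is listening on the port, not whether the service behind it is healthy. The names `Service`, `is_port_open` and `check_vip` are hypothetical, and a single host is assumed for simplicity even though each service may have its own VIP.

```python
import socket
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    port: int
    protocol: str
    use: str


# Ports taken from the table above.
SERVICES = [
    Service(80, "TCP/HTTP", "Web Portal Access over HTTP"),
    Service(443, "TCP/HTTPS", "Web Portal Access over HTTPS"),
    Service(3306, "TCP/SQL", "SQL Service"),
    Service(2200, "TCP/SFTP", "SFTP Service"),
    Service(12211, "TCP/Memcache", "Memcache Service"),
]


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_vip(host: str) -> dict[int, bool]:
    """Check every load balanced port on one host (assumed VIP address)."""
    return {service.port: is_port_open(host, service.port) for service in SERVICES}
```

For example, `check_vip("192.0.2.100")` would return a dictionary keyed by port, where `192.0.2.100` stands in for a VIP address in your environment.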

Deployment Concept

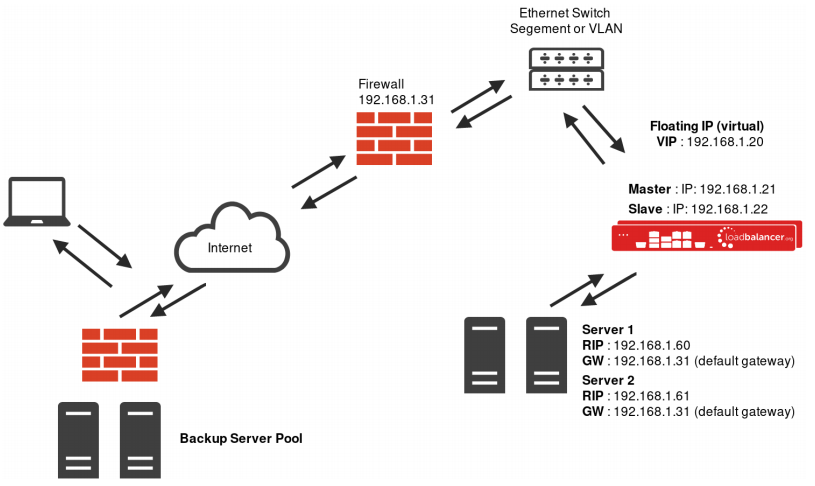

Note: The load balancer can be deployed as a single unit, although Loadbalancer.org recommends a clustered pair for resilience & high availability. Please refer to section 3 in the appendix on page 35 for more details on configuring a clustered pair.

Deployment Methods

The load balancer can be deployed in 4 fundamental ways: Layer 4 DR mode, Layer 4 NAT mode, Layer 4 SNAT mode, and Layer 7 SNAT mode. For File Fabric, a combination of layer 4 DR mode and layer 7 SNAT mode is recommended. It is also possible to use only layer 7 SNAT mode; however, this setup does not perform as well, and client source IP addresses are not passed through to the SME servers on the back end. Both setups are fully described in the Deployment Guide.

Layer 4 DR Mode

One-arm direct routing (DR) mode is a very high performance solution that requires little change to your existing infrastructure.

- DR mode works by changing the destination MAC address of the incoming packet on the fly to match the selected Real Server, which is very fast

- When the packet reaches the Real Server, it expects the Real Server to own the Virtual Service's IP address (VIP). This means that you need to ensure that the Real Server (and the load balanced application) responds to both the Real Server's own IP address and the VIP

- The Real Server should not respond to ARP requests for the VIP. Only the load balancer should do this. Configuring the Real Servers in this way is referred to as Solving the ARP Problem. Please refer to the full Deployment Guide for more information.

- On average, DR mode is 8 times quicker than NAT for HTTP, 50 times quicker for Terminal Services and much, much faster for streaming media or FTP

- The load balancer must have an interface in the same subnet as the Real Servers to ensure the layer 2 connectivity required for DR mode to work

- The VIP can be brought up on the same subnet as the Real Servers, or on a different subnet provided that the load balancer has an interface in that subnet

- Port translation is not possible in DR mode, i.e. the Real Server (RIP) port cannot differ from the VIP port

- DR mode is transparent, i.e. the Real Server will see the source IP address of the client
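The behaviour described in the list above can be modelled with a small Python sketch. It is a simplified model, not the appliance's implementation: only the destination MAC address is rewritten, while the source and destination IP addresses stay untouched. That is why the Real Server must accept traffic addressed to the VIP and why it still sees the client's source IP address. The `Frame` type, `dr_forward` function and all addresses are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Frame:
    src_mac: str
    dst_mac: str
    src_ip: str
    dst_ip: str


def dr_forward(frame: Frame, real_server_mac: str) -> Frame:
    """Model DR mode forwarding: rewrite the destination MAC only."""
    # IP addresses are deliberately left unchanged, so the packet still
    # carries the client's source IP and the VIP as its destination.
    return replace(frame, dst_mac=real_server_mac)


# A client packet arriving at the load balancer, addressed to the VIP.
incoming = Frame(
    src_mac="02:00:00:00:00:01",   # hypothetical upstream router MAC
    dst_mac="02:00:00:00:00:aa",   # hypothetical load balancer MAC
    src_ip="198.51.100.7",         # client IP
    dst_ip="192.0.2.100",          # VIP
)

forwarded = dr_forward(incoming, real_server_mac="02:00:00:00:00:b1")
```

Because the destination IP in `forwarded` is still the VIP, a Real Server that does not own the VIP would drop the packet, which is the reason for the configuration requirements listed above.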

Layer 7 SNAT Mode

Layer 7 SNAT mode uses a proxy (HAProxy) at the application layer. Inbound requests are terminated on the load balancer, and HAProxy generates a new request to the chosen Real Server. As a result, Layer 7 is a slower technique than DR or NAT mode at Layer 4. Layer 7 is typically chosen when either enhanced options such as SSL termination, cookie based persistence, URL rewriting, header insertion/deletion etc. are required, or when the network topology prohibits the use of the layer 4 methods.

This mode can be deployed in a one-arm or two-arm configuration and does not require any changes to the Real Servers.

However, since the load balancer is acting as a full proxy it doesn’t have the same raw throughput as the layer 4 methods. The load balancer proxies the application traffic to the servers so that the source of all traffic becomes the load balancer.

- SNAT mode is a full proxy and therefore load balanced Real Servers do not need to be changed in any way

- Because SNAT mode is a full proxy any server in the cluster can be on any accessible subnet including across the Internet or WAN

- SNAT mode is not transparent by default, i.e. the Real Servers will not see the source IP address of the client. By default they will see the load balancer's own IP address, or any other local appliance IP address if preferred (e.g. the VIP address); this can be configured per layer 7 VIP. If required, the client's IP address can be passed through either by enabling TProxy on the load balancer or, for HTTP, by using X-Forwarded-For headers. Please refer to the administration manual for more details.

- SNAT mode can be deployed using either a 1-arm or 2-arm configuration
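When X-Forwarded-For headers are used to pass the client address through, the application behind the load balancer has to read that header instead of the connection's source address. The following Python sketch shows one cautious way to do this, assuming the header is only trusted when the connection comes from a known proxy address. The function name `client_ip_from_headers` and the addresses are hypothetical.

```python
import ipaddress


def client_ip_from_headers(
    headers: dict[str, str],
    peer_ip: str,
    trusted_proxies: set[str],
) -> str:
    """Return the original client IP if the peer is a trusted proxy."""
    # Never trust the header when the connection is not from our proxy.
    if peer_ip not in trusted_proxies:
        return peer_ip

    # HTTP header names are case-insensitive.
    lowered = {name.lower(): value for name, value in headers.items()}
    forwarded = lowered.get("x-forwarded-for", "")
    entries = [part.strip() for part in forwarded.split(",") if part.strip()]

    # Entries are ordered client first, then each proxy. Walk from the
    # right and return the first address that is not one of our proxies.
    for candidate in reversed(entries):
        try:
            ipaddress.ip_address(candidate)
        except ValueError:
            break  # malformed entry: fall back to the peer address
        if candidate not in trusted_proxies:
            return candidate
    return peer_ip


# Hypothetical load balancer address seen by the Real Server.
proxies = {"10.0.0.5"}
seen = client_ip_from_headers({"X-Forwarded-For": "203.0.113.9"}, "10.0.0.5", proxies)
```

Restricting trust to known proxy addresses matters because any client can send its own X-Forwarded-For header.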

Our Recommendation

Where possible, we recommend using the combination of Layer 4 Direct Routing (DR) mode and Layer 7 SNAT mode. This combination offers the best possible performance for the DR mode services, since replies go directly from the Real Servers to the client and not via the load balancer. It’s also relatively simple to implement. Ultimately, the final choice depends on your specific requirements and infrastructure.

If DR mode cannot be used, for example if the real servers are located in remote routed networks, then SNAT mode is recommended. SNAT mode is also recommended if it is not possible to make network adaptor changes to the SME servers, for example if you do not own or do not control the infrastructure.

If the load balancer is deployed in AWS, Azure, or GCP, layer 7 SNAT mode must be used as layer 4 direct routing is not currently possible on these platforms.
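The recommendation above can be summarised as a small decision function. This Python sketch only restates the guidance in this section; the function and parameter names are hypothetical, and the final choice still depends on your specific requirements and infrastructure.

```python
def recommended_mode(
    *,
    cloud_hosted: bool,
    layer2_reachable: bool,
    can_change_server_network_config: bool,
) -> str:
    """Restate this section's guidance as a simple decision."""
    # Layer 4 DR is not currently possible on AWS, Azure, or GCP.
    if cloud_hosted:
        return "layer 7 SNAT"
    # DR mode needs layer 2 connectivity to the Real Servers and
    # network adaptor changes on the SME servers.
    if not layer2_reachable or not can_change_server_network_config:
        return "layer 7 SNAT"
    return "layer 4 DR + layer 7 SNAT"


on_premises = recommended_mode(
    cloud_hosted=False,
    layer2_reachable=True,
    can_change_server_network_config=True,
)
```

Here `layer2_reachable` is assumed to be false when, for example, the Real Servers are located in remote routed networks.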

Deployment guide

- Storage Made Easy File Fabric Deployment Guide

Manual

- Administration manual v8

Blogs

- Load balancing: the driving force behind successful object storage

- Things to keep in mind while choosing a load balancer for your object storage system

- NAS vs Object Storage: what's best for unstructured data?

- How load balancing helps to store and protect petabytes of data

White paper

- Load balancing: the lifeblood in resilient Object Storage